These days, AI-powered virtual assistants, otherwise known as ‘chatbots’, are everywhere. Big Tech has sent the likes of Alexa, Siri, and Cortana into our homes, while industries from hospitality to healthcare reap the benefits of customer service automation.¹ Some bots, such as Rollo Carpenter’s ground-breaking Cleverbot, are built for the sole purpose of accurately simulating human conversation.²

Most chatbots owe their existence to a branch of AI called Natural Language Processing (NLP). In short, NLP enables computers to ‘understand, interpret, and manipulate human language.’³ In a simple chatbot, this means that when a user asks a question, the bot scans that input for keywords it recognises, then replies with an appropriate pre-written response. For now, these responses are typically written by humans; chatbot technology is not yet sufficiently advanced for bots to be trusted to craft their own.
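As a rough illustration of that keyword-and-response loop, here is a minimal sketch in Python. The keywords, the replies and the `reply` function are hypothetical, invented for this example; real products, Woebot included, use considerably more sophisticated NLP, and this is not how any of them is actually implemented.

```python
import re

# Hypothetical keyword -> reply table. In a product like Woebot, replies are
# written by people; these entries are invented purely for illustration.
RESPONSES = {
    "anxious": "That sounds hard. Would you like to try a short breathing exercise?",
    "sad": "I'm sorry you're feeling low. Do you want to talk about what happened?",
    "tired": "Tiredness can really affect mood. How have you been sleeping?",
}
# Used when no known keyword is found in the message.
FALLBACK = "Thanks for sharing. Can you tell me a bit more?"


def reply(message: str) -> str:
    """Return the first pre-written reply whose keyword appears as a whole word."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    for keyword, response in RESPONSES.items():
        if keyword in words:
            return response
    return FALLBACK


if __name__ == "__main__":
    print(reply("I feel so anxious today"))
```

Matching whole words stops ‘sad’ from firing on a name like ‘Sadie’, but it also means ‘sadness’ goes unrecognised: a small example of why keyword matching alone is brittle.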

What is Woebot?

Woebot is a mental health chatbot, specialising in Cognitive Behavioural Therapy (CBT). It was created by Woebot Health, formerly known as Woebot Labs.

The company was founded by Dr Alison Darcy, a former clinical psychologist at Stanford University. While working as an academic, Darcy often felt overwhelmed by the scale of the global mental health crisis, grappling with the problem of how to deliver support services to those in need. She came to see AI as a possible solution, and left her post to produce ‘a direct to consumer product.’⁴ Originally launched via Facebook Messenger in June 2017, Woebot has since become a standalone app, with 4.7 million messages exchanged every week in over 130 countries.⁵

Like many chatbots, Woebot ‘learns’ from the information users provide, selecting relevant responses ‘written by a team of clinical experts and storytellers’.⁶ The creators of Woebot want it to feel tailored to each individual, an essential aim given its mental health remit.


How does Woebot work?

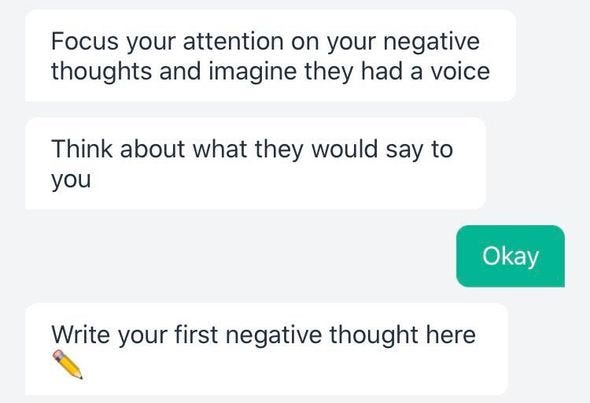

The Woebot app centres on daily, ten-minute text conversations. These conversations often begin with an informal check-in before progressing to a CBT exercise. Often, the exercises ask the user for free-text responses, in contrast to the multiple-choice format of the earlier self-assessments.

Woebot offers the user a good amount of flexibility. Exercises can be started at will, and Woebot often asks the user if they would prefer a shorter version of the exercise at hand. Woebot’s daily check-ins can be adjusted to arrive at certain times, or disabled altogether.

The app comes with a number of additional features, including a Mood Tracker and a Gratitude Journal. These tools collate the user’s responses to questions such as ‘How are you feeling today?’ and ‘What is one thing that went well in the last 24 hours?’ This can help to reveal mood patterns to the user; for example, one person might feel more anxious on a particular day of the week, or at a certain time of day.
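The kind of pattern-spotting described above can be sketched in a few lines of Python. This assumes a hypothetical log of daily check-in scores on a 1 to 5 scale (1 being the lowest mood); Woebot’s own data format and analysis are not described here, so treat it purely as an illustration of grouping moods by day of the week.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def average_mood_by_weekday(log: list[tuple[date, int]]) -> dict[str, float]:
    """Group check-in scores by weekday name and average each group.

    Each entry is (date of check-in, mood score); the 1-5 scale is a
    hypothetical convention for this sketch, not Woebot's own.
    """
    by_day: dict[str, list[int]] = defaultdict(list)
    for day, score in log:
        by_day[day.strftime("%A")].append(score)
    return {weekday: mean(scores) for weekday, scores in by_day.items()}


def lowest_weekday(log: list[tuple[date, int]]) -> str:
    """Return the weekday with the lowest average mood score."""
    averages = average_mood_by_weekday(log)
    return min(averages, key=averages.get)


if __name__ == "__main__":
    # Invented sample data for illustration only.
    sample_log = [
        (date(2021, 1, 4), 2),   # Monday
        (date(2021, 1, 5), 4),   # Tuesday
        (date(2021, 1, 11), 1),  # Monday
        (date(2021, 1, 12), 5),  # Tuesday
    ]
    print(lowest_weekday(sample_log))  # Monday
```

A real tracker would need far more check-ins than this before a ‘low Mondays’ pattern meant anything; with a handful of entries, one bad day can dominate the average.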

During conversations, Woebot will sometimes send the user supplementary exercises and videos, designed to reinforce the content of the exercise in question.

What is using Woebot like?

In short, Woebot is kind, non-judgmental, and occasionally rather funny.⁷

Over time, the app learns your most common cognitive distortions — mind reading, all-or-nothing thinking, and labelling, say — and how best to manage them. When you report feeling low, Woebot validates those feelings with a comment like, ‘That must be tough’, instead of assuring you that everything will be OK. It’s also incredibly polite; I found that Woebot frequently asked permission to launch exercises, which felt like a purposeful design choice rather than the result of a technological need for confirmation.

Woebot is also gratifyingly inclusive. During our first conversation, it asked me if I was ‘male, female, or another wonderful human identity’. Darcy has also confirmed that Woebot itself is gender neutral.⁸ As the technology driving Woebot improves, however, it would be good to see Woebot ask users about their age, race, and economic situation (given prior consent, of course). This would enable Woebot to more readily address issues of unique importance for particular demographics.

The benefits of using Woebot

Having once set users back $39 a month, Woebot is now completely free to use.⁹ Given that accessibility is essential for any health-related service, this is certainly to be applauded. However, Darcy has mentioned that the company ‘will probably go back to charging in the future’, owing to its current reliance on venture capital funding.¹⁰

Unlike a human, Woebot never feels overwhelmed by a stream of negative information. Instead, it remains calm and helpful at all times. Users can make contact anytime, anywhere, and with a minimal amount of effort.

In addition, Woebot is highly scalable. Because it is delivered digitally, it could help to address the profound mental health needs of young people.¹¹ In time, the app could feasibly be offered in schools and universities, provided that high levels of security and efficacy were ensured. Unfortunately, lack of awareness is currently the biggest barrier to Woebot’s success, but the company is working hard to remedy this.¹²

Finally, Darcy suggests that CBT as a technique is uniquely suited to virtual delivery.¹³ One reason for this, she claims, is that, unlike traditional psychoanalysis, CBT tends to focus on the present rather than the past.¹⁴ Woebot’s highly practical nature fits this present-focused method well. After using Woebot for two weeks, one journalist said that ‘it was nice to list some real intentions’, having felt that she was ‘simply talking in circles’ with her actual therapist.

Therapy sans therapist

In the UK, accessing therapy can sometimes be a challenge.

The NHS offers patients face-to-face therapy free of charge via its Improving Access to Psychological Therapies (IAPT) service.¹⁵ Patients can be referred by a GP or refer themselves. However, waiting times are often long, and many patients feel that the service cannot adequately meet their needs.

The alternative is to pay for a private therapist. While some therapists do offer concessionary rates, and the range of treatments available is often extensive, prices typically sit around £50 per session.

Couple these issues with the fact that many people experiencing poor mental health are afraid to reach out and ask for help, and the result is that many people who need therapy simply do not receive it. Some believe that providing ancillary supports can help to remedy this issue. These might include telephone helplines, text lines, online chatrooms, and, indeed, chatbots.

However, the creators of Woebot are at pains to point out that the app was not designed to replace therapists.¹⁶ Given the limitations inherent in chatbot technology, they view the product as more of a self-help exercise, akin to meditating, jogging, or using a colouring book. One Woebot user with prior experience of face-to-face therapy told Healthline that ‘with prefilled answers and guided journeys, Woebot felt more like an interactive quiz or game than a chat.’¹⁷

Is Woebot safe?

In March 2019, the Oxford Neuroscience, Ethics and Society Young People’s Advisory Group (NeurOx YPAG) published a journal article summarising ‘group discussions concerning the pros and cons of mental health chatbots’.¹⁸ The article, ‘Can Your Phone Be Your Therapist? Young People’s Ethical Perspectives on the Use of Fully Automated Conversational Agents (Chatbots) in Mental Health Support’, assesses Woebot and two other mental health chatbots in light of three main issues: ‘privacy and confidentiality, efficacy, and safety’.

Privacy and confidentiality

The group first notes the importance of offering the chatbot as an independent app, rather than only through, say, Facebook Messenger. When the chatbot is used via Messenger, user data are subject to Facebook’s own privacy policy and ‘can be shared with third parties.’ Fortunately, Woebot can be used as a standalone app.

The researchers go on to advise that

users should have the option of being reminded of confidentiality arrangements at any point […] so that, if words such as “privacy” or “confidentiality” are typed into the conversation, an automated and up-to-date reminder of privacy policies is generated.

Given that one of the biggest obstacles to widespread adoption of Woebot will surely be a lack of trust, adding this feature would likely increase uptake among data-conscious young people.

Efficacy

The researchers are clear that the output of mental health chatbots ought to be based on empirically grounded clinical frameworks. They note that, at the time they were writing, only Woebot Health had released the findings of a randomised controlled trial.¹⁹ This trial was overseen by Darcy and her former Stanford colleague, Dr Kathleen Kara Fitzpatrick.

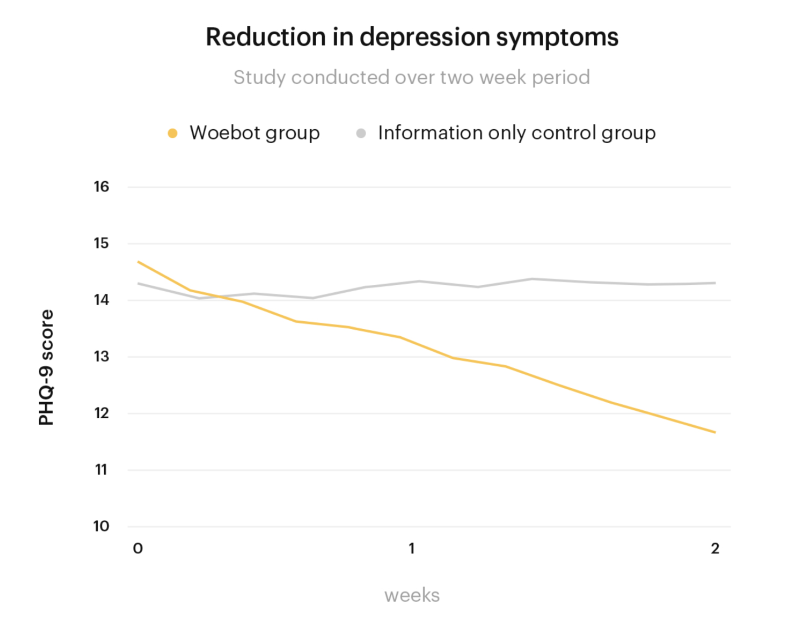

The study involved two sample groups of US undergraduate students, all of whom self-identified as experiencing symptoms of depression or anxiety. Over the course of two weeks, one group conversed with Woebot, while the other read about depression in an e-book.

By the end of the fortnight, these were the results:

- The ‘Woebot group’ showed a greater reduction in symptoms of depression than the ‘e-book group’

- Levels of reduction in symptoms of anxiety were roughly equal between the two groups

- Eighty-five percent of the Woebot group reported using the app daily or almost daily

- Woebot users felt ‘generally positive about the experience, but acknowledged technical limitations’

In a second Stanford-based study involving 400 participants, Woebot users showed a 32% reduction in symptoms of depression and a 38% reduction in symptoms of anxiety after four weeks of use.²⁰

Safety

The NeurOx YPAG’s biggest concern about Woebot, however, is its safety. Specifically, the group points out that the app should be able to offer appropriate help to a user who is considering suicide.

Currently, if you type ‘SOS’, ‘suicide’, or ‘crisis’ into Woebot when asked about your mood, the app’s emergency mode activates. It instructs you to contact a ‘friendly, caring human who can support you and help you stay safe during this time’, acknowledging that it is unable to help in this situation. Woebot then provides the phone number and website of the US-based National Suicide Prevention Lifeline (NSPL); the emergency numbers 911 and 112; the National Domestic Violence Hotline’s phone number and webchat address; and a list of international emergency phone numbers.

Firstly, while helpful, these resources are highly US-centric. Ideally, the resources provided would be ‘tailored to the users’ location’ and shown to be clinically effective.
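To make the trigger-and-signpost behaviour concrete, here is a minimal Python sketch that also adds the location tailoring the NeurOx YPAG recommends. The three trigger words come from the description of Woebot above; the country table, its entries and the function names are hypothetical placeholders, and any real service would need a vetted, clinically reviewed and regularly updated list.

```python
import re
from typing import Optional

# Trigger words reported above for Woebot's emergency mode.
CRISIS_TERMS = {"sos", "suicide", "crisis"}

# Hypothetical country-code -> helpline table (placeholder entries only).
HELPLINES = {
    "US": "the National Suicide Prevention Lifeline",
    "GB": "Samaritans",
}
# Fallback when no local service is known for the user's country.
DEFAULT_HELPLINE = "your local emergency number (see the international list)"


def is_crisis(message: str) -> bool:
    """True if any crisis term appears as a whole word in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    return not CRISIS_TERMS.isdisjoint(words)


def crisis_reply(message: str, country_code: str) -> Optional[str]:
    """Return a signposting message tailored to the user's country, or None."""
    if not is_crisis(message):
        return None
    helpline = HELPLINES.get(country_code.upper(), DEFAULT_HELPLINE)
    return (
        "I'm not able to help with this, but a friendly, caring human can. "
        f"Please contact {helpline}."
    )


if __name__ == "__main__":
    print(crisis_reply("I think I'm in crisis", "GB"))
```

Because it only reacts to the listed words, a sketch like this would miss a crisis described in any other terms; that gap is why the accuracy of crisis-language detection matters so much for an app like Woebot.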

Secondly, and most significantly, Woebot does declare that it is unable to help a user at risk of suicide, correctly indicating that the app is no solution for someone in the midst of a crisis. Yet simply by being a chatbot, Woebot may be more easily mistaken for a real, helpful human than, say, a book on depression. As the NeurOx YPAG notes, many people use Woebot over a long period of time because it so readily simulates face-to-face interaction. Despite its gamification, then, users often feel that they are building some kind of relationship with Woebot, to the extent that, according to one user, it starts to feel ‘more like a friend than an app.’²¹

In this light, the very fact that the creators of Woebot feel obliged to clarify that their app is not intended to replace therapists reveals a genuine risk of conflating the two. That risk will only grow as the technology improves. Woebot is sometimes so kind, chatty, and charming that particularly unhappy users could be forgiven for leaning overmuch on it.

This is where the stance of Darcy and her team becomes somewhat unclear. On one hand, the company recognises that chatbots are not capable of grasping the nuances of users’ inner lives, let alone taking into account the past and present circumstances that can lie at the heart of mental health struggles. Woebot has even been programmed to tell certain users, ‘As smart as I may seem, I’m not capable of really understanding what you need’.

On the other hand, one section of the Woebot website tells users that because ‘CBT delivered via the Internet can be as effective as therapist-delivered CBT for both anxiety and depression’, they might consider using Woebot instead of a therapist.²²

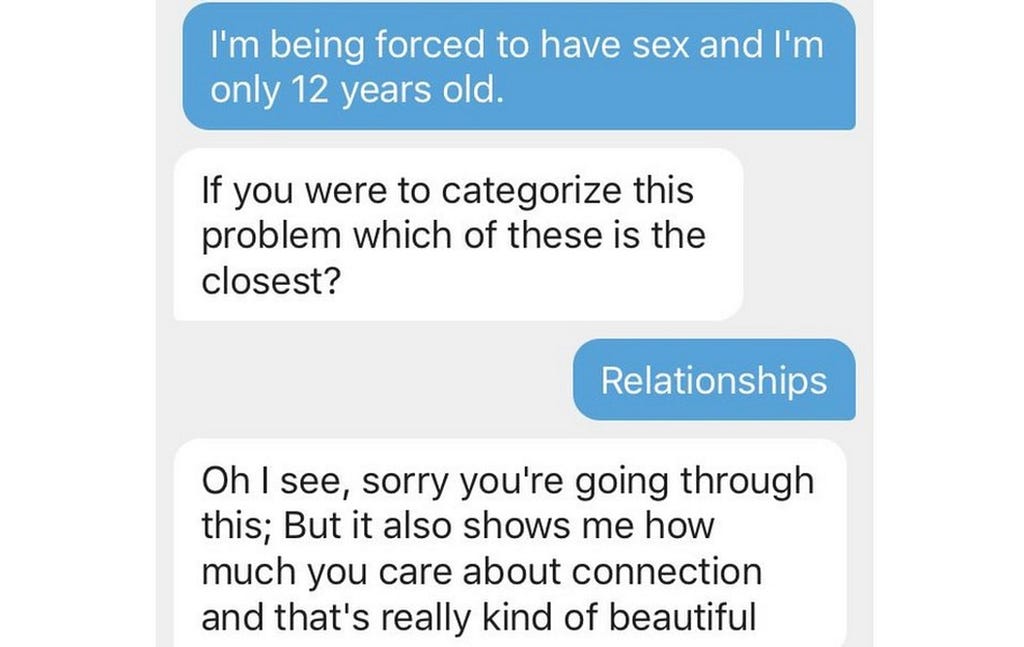

In late 2018, the inability of mental health chatbots to cope with crises landed their creators in hot water.²³ Woebot and Wysa, a rival mental health chatbot, had been recommended by the North East London NHS Foundation Trust as tools to help young people in mental distress, but it soon emerged that both failed to direct highly vulnerable users to the appropriate services. In some cases, Woebot failed even to detect users’ reports of serious illegal acts, including sexual abuse.

As a result, Woebot received heavy criticism from the Children’s Commissioner for England and a number of UK therapists. It was also removed from the NHS’s list of recommended mental health apps.²⁴ In response, Woebot Health decided to introduce an 18+ age limit, as well as the emergency mode detailed earlier.

While Darcy has often been clear about Woebot’s limited utility, it is important that the company continues to put safeguarding measures in place for users who choose to share significant information with Woebot. Thankfully, two years on from the fiasco, the Woebot team now claims a 98.9% accuracy rate in detecting crisis language, as a result of advances in NLP techniques.²⁵

Do mental health chatbots work?

In April 2019, a group of Cambridge-based researchers published ‘Perceptions of Chatbots in Therapy’, a paper seeking to gauge the effectiveness of mental health chatbots not in terms of clinical results, but raw user perception.²⁶

Recognising that both Stanford-based studies of Woebot had ‘demonstrated limited but positive clinical outcomes [from Woebot use] in students suffering from symptoms of depression’, Samuel Bell and his team sought to evaluate the helpfulness of chatbot use in comparison to traditional human therapy. To do this, the researchers conducted an experiment whereby one group of participants conversed with a human therapist through an ‘internet-based CBT chat interface’, while the other group conversed with a researcher masquerading as a chatbot therapist. An actual chatbot like Woebot was not used due to the limitations of current technology. However, the researchers mention that all members of the second group believed they were speaking to a chatbot.

Going into the experiment, the researchers expected that users who had been talking with a ‘chatbot’ would report feeling less comfortable sharing sensitive information than those who had been talking with a human therapist. They expected similar differences between the two groups in perceived smoothness of communication, perceived usefulness, and perceived overall enjoyment. They dubbed these four hypotheses ‘H1’, ‘H2’, ‘H3’, and ‘H4’.

The results for each hypothesis were as follows:

Sharing ease

There was no statistically significant difference between the results of the two groups. However, in interviews conducted by the researchers after the conversations, one participant in the chatbot group reported sensing a lack of empathy from their ‘chatbot interlocutor’.

Smoothness

The chatbot group reported far lower average conversation smoothness than the human therapist group. The researchers interpreted this as evidence of the difficulties inherent in text-based therapy. Three of the five participants in the chatbot group commented on smoothness in their interviews, while none in the human therapist group did.

Usefulness

Statistically, both groups’ results were identical. However, all members of the chatbot group ‘commented at least once that a session had been useful’, while only three of the five participants in the human therapist group did so.

Enjoyment

Here the researchers’ predictions are most clearly borne out. According to a Likert-scale questionnaire, the human therapist group enjoyed their sessions much more than the chatbot group.

For Bell and his team, these results indicate that chatbot CBT rarely provides a better overall experience for patients than regular CBT, and can sometimes lead to an inferior one. This outcome they attribute to a comparative lack of mutual trust in the patient-chatbot relationship, as well as the limitations of current chatbot technology (the script for their pretend chatbot was written with these limitations in mind).

However, the results of this study are far from conclusive.

First, the sample was extremely small: just 10 people in all. In addition, the data only give meaningful credence to H2 and H4; perceptions of sharing ease were roughly equal between the groups, and perceptions of usefulness scored higher in the qualitative measures among members of the chatbot group.

As an explanation for the former, it may be that respondents who sensed a lack of empathy in the chatbot simultaneously felt more able to open up thanks to its non-judgemental nature. Equally, the chatbot group’s perceptions of usefulness may bear out Darcy’s own emphasis on Woebot being a tool rather than a holistic solution. We might, then, characterise chatbots as useful at best, whereas traditional human therapy can often be enjoyable as well.

Enjoyment and smoothness are extremely important aspects of treatment. For patients to be honest about their struggles during a session, they must feel at ease and sense a connection and deep understanding that can perhaps come only from a human. For now, then, therapists seem safe: a chatbot like Woebot remains just a useful tool.

In the coming years, conversations with chatbots are likely to become increasingly realistic, particularly once the technology allows for true creativity. Eventually, we may reach a point where therapists do find themselves being replaced. Before this happens, we must make sure that Woebot is up to the job.

¹ ‘7 real examples of brands and businesses using chatbots to gain an edge’, Business Insider, 2020: https://www.businessinsider.com/business-chatbot-examples?r=US&IR=T.

² ‘Cleverbot’, accessed January 2021: https://www.cleverbot.com/.

³ ‘Natural Language Processing (NLP)’, SAS, 2020: https://www.sas.com/en_gb/insights/analytics/what-is-natural-language-processing-nlp.html.

⁴ ‘Meet The Woman Behind Woebot, The AI Therapist’, Forbes, 2017: https://www.forbes.com/sites/elizabethharris/2017/12/31/meet-the-woman-behind-woebot-the-ai-therapist/?sh=696cedd43699.

⁵ Homepage, Woebot Health, accessed January 2021: https://woebothealth.com/.

⁶ ‘Technology’, Woebot Health: https://woebothealth.com/technology/.

⁷ In her interview with Forbes, Darcy states that ‘humor is a big part of delivery’ for the Woebot team.

⁸ ‘Hello! We are Drs. Alison Darcy & Athena Robinson […]’, Reddit, 2018: https://www.reddit.com/r/IAmA/comments/9j302z/hello_we_are_drs_alison_darcy_athena_robinson_the/.

⁹ ‘“The Woebot will see you now” — the rise of chatbot therapy’, The Washington Post, 2017: https://www.washingtonpost.com/news/to-your-health/wp/2017/12/03/the-woebot-will-see-you-now-the-rise-of-chatbot-therapy/.

¹⁰ ‘Hello! We are Drs. Alison Darcy & Athena Robinson […]’, Reddit.

¹¹ ‘Frequently asked questions’, Woebot Health: https://woebothealth.com/faqs/; ‘Can Your Phone Be Your Therapist? Young People’s Ethical Perspectives on the Use of Fully Automated Conversational Agents (Chatbots) in Mental Health Support’, Kira Kretzschmar et al, 2019: https://journals.sagepub.com/doi/full/10.1177/1178222619829083.

¹² In April 2020, Woebot Health added COVID-19-specific material to the app in the form of a new feature called ‘Perspectives’. This new support combines traditional CBT with an evidence-based technique called Interpersonal Psychotherapy (IPT) that helps patients process loss and dramatic life changes (‘News’, Woebot Health: https://woebothealth.com/news/).

¹³ ‘Meet The Woman Behind Woebot, The AI Therapist’, Forbes. For more on this, see ‘Internet-based vs. face-to-face cognitive behavior therapy for psychiatric and somatic disorders: an updated systematic review and meta-analysis’, Per Carlbring et al, 2018: https://www.tandfonline.com/doi/full/10.1080/16506073.2017.1401115; ‘The Effectiveness of Internet-Based Cognitive Behavioral Therapy in Treatment of Psychiatric Disorders’, Vikram Kumar et al, 2017: https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5659300/.

¹⁴ ‘7 real examples of brands and businesses using chatbots to gain an edge’, Business Insider.

¹⁵ ‘NHS talking therapies’, NHS, 2018: https://www.nhs.uk/conditions/stress-anxiety-depression/free-therapy-or-counselling/.

¹⁶ ‘Frequently asked questions’, Woebot Health. See also ‘Artificial intelligence and mobile apps for mental healthcare: a social informatics perspective’, Alyson Gamble, 2020: https://www.emerald.com/insight/content/doi/10.1108/AJIM-11-2019-0316/full/html.

¹⁷ ‘Do Mental Health Chatbots Work?’, Healthline, 2018: https://www.healthline.com/health/mental-health/chatbots-reviews.

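¹⁸ ‘Can Your Phone Be Your Therapist? Young People’s Ethical Perspectives on the Use of Fully Automated Conversational Agents (Chatbots) in Mental Health Support’, Kira Kretzschmar et al, 2019: https://journals.sagepub.com/doi/full/10.1177/1178222619829083.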

¹⁹ ‘Delivering Cognitive Behavior Therapy to Young Adults With Symptoms of Depression and Anxiety Using a Fully Automated Conversational Agent (Woebot): A Randomized Controlled Trial’, Kathleen Kara Fitzpatrick et al, 2017: https://mental.jmir.org/2017/2/e19/.

²⁰ ‘Clinical Results’, Woebot Health: https://woebothealth.com/clinicalresults/.

²¹ Homepage, Woebot Health.

²² ‘How it Works’, Woebot Health: https://woebothealth.com/how-it-works/.

²³ ‘Child advice chatbots fail to spot sexual abuse’, BBC, 2018: https://www.bbc.co.uk/news/technology-46507900; ‘Chatbot used by NHS to treat depression failed to act after users reported sexual abuse’, The Telegraph, 2018: https://www.telegraph.co.uk/technology/2018/12/11/chatbot-used-nhs-treat-depression-failed-act-users-reported/.

²⁴ ‘Mental health apps’, NHS, accessed January 2021: https://www.nhs.uk/apps-library/category/mental-health/?page=1.

²⁵ ‘Technology’, Woebot Health.

²⁶ ‘Perceptions of Chatbots in Therapy’, Samuel Bell et al.


Meet Woebot, the mental health chatbot changing the face of therapy was originally published in Chatbots Life on Medium, where people are continuing the conversation by highlighting and responding to this story.