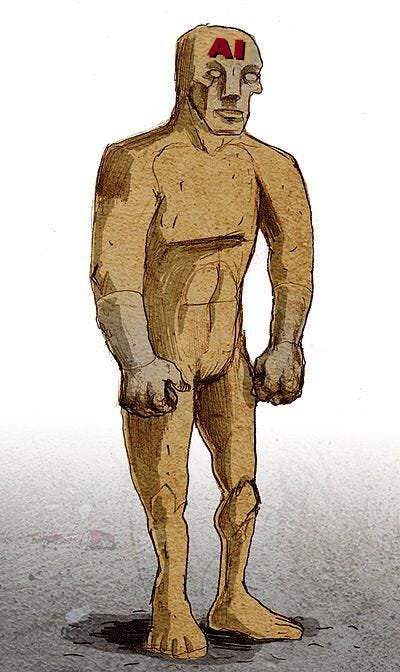

golem: [noun] an artificial human being in Hebrew folklore endowed with life. — Merriam-Webster

According to legend, a golem was animated by instructions inscribed on its forehead and could be deactivated by removing them. The golem had no ability to think or decide; it could only carry out orders. Brought to life through magical rituals, it was limited to obeying its creator's every command literally.

A well-designed chatbot is a modern golem. It simulates life until you present it with information it cannot handle, and then you encounter the implacable unreasonableness of a system that only mimics life but does not possess it.

When an AI system is presented with data it was programmed to expect, it gives the impression that it can make intelligent decisions. When the data falls outside what it was programmed to handle, its behavior becomes unpredictable and its response no longer serves its purpose.

Putting such a golem in charge of your life, whether it is driving your car or handling your financial transactions, is extreme folly. That is why every AI system that interacts with human beings should provide an override that gives access to human support.
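To make the idea of an override concrete, here is a minimal, hypothetical sketch of a chatbot router that hands the conversation to a person instead of guessing. It assumes a toy keyword matcher; the intent names, keyword sets, and the `route` function are invented for illustration and are not taken from any real chatbot framework. The bot escalates when the user asks for a human, when the input matches nothing it was built for, or when the input is ambiguous.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical intents the bot was "programmed to expect", with trigger keywords.
INTENTS = {
    "check_balance": {"balance", "account", "funds"},
    "reset_password": {"password", "reset", "locked"},
}

# Words that always bypass the bot: the manual override.
OVERRIDE_WORDS = {"human", "agent", "representative"}


@dataclass
class Reply:
    handled_by: str        # "bot" or "human"
    intent: Optional[str]  # matched intent, or None when escalated


def tokenize(text: str) -> set:
    """Lowercase the message and split it into a set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def route(message: str) -> Reply:
    words = tokenize(message)

    # The user can always ask for a person, no matter what the bot thinks.
    if words & OVERRIDE_WORDS:
        return Reply("human", None)

    # Score each known intent by how many of its keywords appear.
    scores = {name: len(words & keys) for name, keys in INTENTS.items()}
    best = max(scores.values())
    winners = [name for name, score in scores.items() if score == best]

    # No match means input outside what the bot was built for; a tie means
    # the bot cannot tell what is wanted. Either way, escalate instead of guessing.
    if best == 0 or len(winners) > 1:
        return Reply("human", None)
    return Reply("bot", winners[0])


if __name__ == "__main__":
    for msg in ["What is my account balance?", "My dog ate my car keys", "Let me talk to a human"]:
        print(msg, "->", route(msg))
```

The important design choice is that escalation is the default whenever the bot is not clearly inside its expected inputs, rather than an afterthought bolted on for known failure cases.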

The trap we can fall into is that these AI designs are incredibly efficient when their inputs are limited to what they were designed for. This lulls the inexperienced designer into assuming they have covered every possible case and into neglecting to provide a way to override the system when it fails.

This means that a poorly designed AI system handling human problems acts like a wood chipper that cannot distinguish between human hands and the wood it is designed to chip. Fortunately, wood chippers have a manual override. Some AI systems do not.

With AI Designs, We Can Create Modern Day Golems was originally published in Chatbots Life on Medium.